GLSL Tutorial – Lighting

| Prev: Color Example | Next: Dir Lights per Vertex I |

Lighting is essential in computer graphics. Scenes without lighting seem too flat, making it hard to perceive the shape of objects. Here we will explore the basic lighting and shading models.

A lighting model determines how light is reflected at a particular point. The perceived colour at that point depends on a number of parameters, such as the light direction, the viewer direction, and the properties of the material, to name a few.

A shading model determines how a lighting model is used to light a surface. For instance, we can compute a single colour value per triangle (Flat shading); compute the colour at the vertices of a triangle and interpolate those values for points inside the triangle (Gouraud shading); or compute the colour for every surface point (Phong shading).

Lighting is deeply related to colour. When an object is lit we observe colour; if no light reaches an object, it looks completely black.

Colour in CG is composed of several terms, namely:

- diffuse: light reflected by an object in every direction. This is what we commonly call the colour of an object.

- ambient: used to simulate bounced lighting. It fills the areas that direct light does not reach, thereby preventing those areas from becoming too dark. Commonly this value is proportional to the diffuse colour.

- specular: this is light that gets reflected more strongly in a particular direction, commonly in the reflection of the light direction vector around the surface’s normal. This colour is not related to the diffuse colour.

- emissive: the object itself emits light.

The next figure shows the effect of the first three colour components when an object is lit. To define an object’s material we define values for each of the above components.

Figure: From left to right: diffuse; ambient; diffuse + ambient; diffuse + ambient + specular

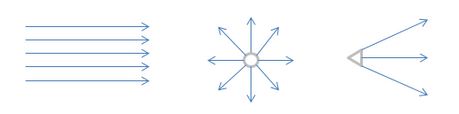

Lights also come in many flavours. The most common light types, which are also the easiest to implement, are directional lights, point lights, and spotlights.

In a directional light, we assume that all light rays are parallel, as if the light were placed infinitely far away, so distance implies no attenuation. For instance, for all practical purposes, the sunlight reaching an observer on Earth is directional. This implies that the light direction is constant for all vertices and fragments, which makes this the easiest type of light to implement.

Point lights spread their rays in all directions, just like an ordinary lamp, or even the sun if we were to model the solar system.

Spotlights are point lights that only emit light in a particular set of directions. A common approach is to consider that the light volume is a cone, with its apex at the light’s position. Hence, an object will only be lit if it is inside the cone.

Figure: Light types. From left to right: directional, point, and spotlights.

There are many approaches to computing the light that is reflected by an object towards the camera, or eye. To compute this value for a particular point we need to know:

- where the light comes from;
- the angle between the light vector and the surface normal (a vector perpendicular to the surface);
- where the camera is;
- some other settings.

The equation used to compute the colour, or reflected light, at a particular point dictates which settings are used and how.

As mentioned before, our options are not limited to the choice of lighting equation. Given a triangle, we can compute the lighting at each vertex and then interpolate it for each fragment inside the triangle. Alternatively, we can interpolate all the available vertex data, send it to the fragment shader, and compute the colour per fragment. These shading models are orthogonal to the lighting equations, in the sense that we can combine any equation with any of the above shading models to light our scene.

Back when fixed functionality was available and there was no need to write shaders, lighting was computed per vertex. The colour of each vertex was then sent to the rasterization and interpolation stages, where it was interpolated for each fragment. The fixed functionality was an implementation of the Gouraud shading model. The results were not that great, since interpolation is a poor way to approximate lighting, which can vary in a multitude of ways inside a polygon. Imagine a spotlight hitting the centre of a triangle, but none of its vertices. The result would be an unlit triangle, which is clearly incorrect.

Gouraud shading made sense back when we typically had a lot more pixels than vertices, and far less computing power. Nowadays we have a lot more computing power, which in turn allows us to use ever larger numbers of polygons in a scene. These two factors, combined with the poor results of per-vertex lighting, led to the current prevalent trend of performing lighting per pixel, i.e. the Phong shading model.

All the above concentrates on the point being lit, the light sources and the camera position. But what about shadows? That would imply testing if any other object is in the path from the point being lit to each light source. And why can’t other objects act as light sources themselves, reflecting the light that hits them towards our point, thereby contributing to its final colour? And refractions? Lighting is a very complex issue; some may even say that lighting is THE issue in computer graphics.

Anyway, we have to start somewhere, so we are sticking with the simple assumption that we are lighting a point without considering any interaction with the remaining geometry, only the camera and the light sources. In the next sections we’re going to see how to write shaders to simulate all the above-mentioned types of lights, both per vertex and per pixel.

Source code for all the light types and shading models is available: L3DLighting. The zip file includes a Visual Studio 2010 project. To try the different light/shading model combinations, see the function “setupShaders”.


7 Responses to “GLSL Tutorial – Lighting”



Thanks for your tutorials. But according to my tests, there is an error in your program when I run it.

When I press the mouse wheel button and move the mouse, an error occurs.

To solve it, I added some code to yours, and it works.

I inserted the following code into the file “light.cpp” at line 220.

The code is:

```cpp
// wheel button: do nothing but return
else if (tracking == 0)
{
    return;
}
```

That is to say, you might have forgotten to handle the case of a “wheel event”.

(And sorry for my English.)

Thanks for the fix.

Hi,

nice tutorials.

I have been fiddling with the L3DLighting source code provided on this page but have been struggling to switch between the different shading models on the fly. I’ve compiled and attached all the shaders etc. in the shader setup, and I switch shader programs with useProgram(shader1/2/3..) in the render function following a key press. But something that happens after the setBlockUniform calls in the render function messes up the shading models when switching between them. For example, after switching from the pointlight model back to the dirdifambspec model, the object is mostly in the dark for some reason.

Is there something I am missing/doing wrong to be able to switch between the shaders during execution?

First off, thanks for the tutorials. They are very helpful, and I have learned a lot.

I am adapting your L3DLighting example to my own application. Most of this application is a standard MSVC GUI application, but I have one dialog that shows an OpenGL view using freeglut. In my application I need to allow the user to close and open the OpenGL view several times during the execution of the application. When using your code from the L3DLighting example, the scene renders great the first time, but when I close and then re-open the dialog, the scene is empty.

Is there some sort of cleanup that I should be doing to prevent this from happening? I have even completely destructed my dialog and instantiated a new one, and it is still a blank scene during the second time it is opened (the first time is always correct). Thanks.

Hi,

Thanks for the feedback. When you mention closing the window are you actually closing or hiding it? If closing, are you destroying the context?

Thanks for the response. I am using freeglut, and I am closing the window (not hiding it). I imagine that freeglut creates a new context the next time the window is opened, which I assume means that all of my data/shaders are lost. If I hide the window and then show it instead of creating/destroying, will I be able to maintain my same context?

Yes, hiding it should solve the problem.